« I note that after much hue and cry, and many arguments, I still do not know what color this bikeshed will be.

I feel I have been informed of the many examples of problems with colors, cultural relevance of specific hues, details of paint techniques, anecdotes of past experiences with varying colors, larger socio-economic issues reflected through color choices, philosophy of colors, philosophy *about* the philosophy of color, legal and moral issues confronted during color evaluation, the impact of other bikeshed color choices, and how specific colors (and patterns) are under-represented, the finer details of paint application personnel selection, and how certain colors are representative of larger social issues being played out in microcosms in individual environments…

….but I still do not know what color this bikeshed will be.

Please advise. »

Category: LAMP

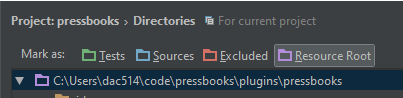

Fix CSS Path Errors by Setting Resource Root Folders In PHPStorm

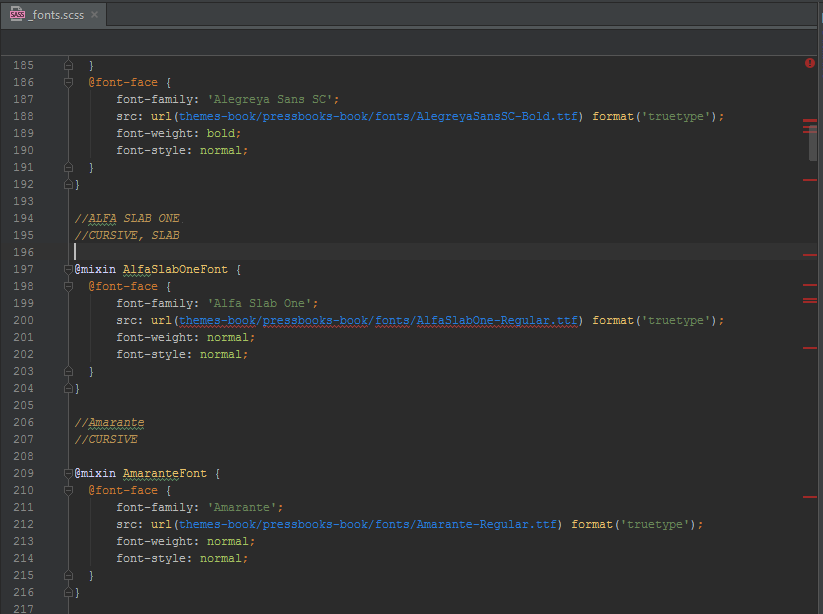

While developing a plugin for WordPress I was having trouble linting CSS files in PHPStorm. One file in particular was giving hundreds of false positives for errors related to paths:

This bothered me. I wanted to fix the reported errors, but the reporting was wrong. Most of the time there was nothing to fix. After much fiddling, I discovered that files under a folder marked as a Resource Root can be referenced with paths relative to that folder:

Lo and behold, the errors became real! Oh crud, time to fix.

Source:

https://www.jetbrains.com/phpstorm/help/configuring-folders-within-a-content-root.html

Setting up Coveralls.io (with Travis CI and PHPUnit)

Step 1)

Register your GitHub repo with Travis CI and Coveralls.io.

Step 2)

In your .travis.yml file, add:

    before_install:
      - composer require phpunit/phpunit:4.8.* satooshi/php-coveralls:dev-master
      - composer install --dev
    script:
      - ./vendor/bin/phpunit --coverage-clover ./tests/logs/clover.xml
    after_script:
      - php vendor/bin/coveralls -v

Where:

- before_install: Calls Composer and installs PHPUnit 4.8.* + satooshi/php-coveralls.

- script: Calls the installed version of PHPUnit and generates a clover.xml file in ./tests/logs/clover.xml. (This XML file will be used by PHP-Coveralls.)

- after_script: Launches satooshi/php-coveralls in verbose mode.

Step 3)

Create a .coveralls.yml file that looks like:

    coverage_clover: tests/logs/clover.xml
    json_path: tests/logs/coveralls-upload.json
    service_name: travis-ci

Where:

- coverage_clover: Is the path to the PHPUnit-generated clover.xml.

- json_path: Is where to output a JSON file that will be uploaded to the Coveralls API.

- service_name: Use either travis-ci or travis-pro.
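If Coveralls shows nothing after a build, it can help to confirm that PHPUnit actually wrote a usable clover.xml. Below is a minimal sketch, assuming the Clover layout PHPUnit emits with `--coverage-clover` (a project-level `<metrics>` element with `statements` and `coveredstatements` attributes) and that the SimpleXML extension is enabled. `cloverStatementCoverage()` is a hypothetical helper, not part of PHPUnit or php-coveralls, and Coveralls computes its own percentage from the uploaded line data, so its figure may differ slightly.

```php
<?php
// Sketch: compute statement coverage from a Clover report such as the
// tests/logs/clover.xml written by `phpunit --coverage-clover`.
// Assumes the project-level <metrics> element carries `statements` and
// `coveredstatements` attributes. cloverStatementCoverage() is hypothetical.
function cloverStatementCoverage(string $xml): float
{
    $report = new SimpleXMLElement($xml);

    // Only the direct <metrics> child of <project> holds project-wide totals;
    // per-file <metrics> elements are nested deeper and are ignored here.
    if (!isset($report->project->metrics['statements'])) {
        throw new RuntimeException('No project-level <metrics> element found');
    }
    $metrics = $report->project->metrics;

    $statements = (int) $metrics['statements'];
    $covered = (int) $metrics['coveredstatements'];

    // Guard against division by zero for a report with no executable code.
    return $statements === 0 ? 0.0 : round(100 * $covered / $statements, 2);
}

// Usage with a trimmed-down report (real reports also contain <file> entries).
$sample = <<<XML
<coverage generated="1446000000">
  <project timestamp="1446000000">
    <metrics files="2" statements="40" coveredstatements="30"/>
  </project>
</coverage>
XML;

echo cloverStatementCoverage($sample), "%\n"; // 75%
```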

Step 4)

Add badges to your GitHub README.md file.

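    [![Build Status](https://travis-ci.org/NAMESPACE/REPO.svg?branch=master)](https://travis-ci.org/NAMESPACE/REPO)
    [![Coverage Status](https://coveralls.io/repos/github/NAMESPACE/REPO/badge.svg?branch=master)](https://coveralls.io/github/NAMESPACE/REPO?branch=master)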

Replace NAMESPACE and REPO to match your GitHub repo.

PHP Configuration Files Rant

Let’s start with a joke. This GitHub repository:

“It’s funny ’cause it’s true” -Homer Simpson

Text configuration files (XML, Yaml, JSON, INI, …) work when the configuration is read once, the software persists in memory, and the application doesn’t exit until the user is done.

This is not what PHP does best. Sure, PHP also reads the configuration file “once” (per request), but the fundamental difference is that PHP starts and exits dozens, maybe hundreds, of times for a single user using a single application.

The metaphorical equivalent would be relaunching World of Warcraft every time a user clicks on something.

I know some of you are thinking “Well that’s dumb. I wrote a game in PHP and the webpage isn’t reloading every time the user clicks…” but break that down: you wrote PHP which renders something the user is experiencing in a web-browser (C++) that may or may not be making Ajaxy calls back to the server (JavaScript) and PHP’s role in this solution is always to start-up, process data, return data, then exit.

For PHP to be the right tool for the right job, it has to be fast. Fast for developers to develop in *and* also fast for end users. (Hooray for PHP7!)

Some clever devs get around configuration performance problems by adding extra steps such as transpiling text into pure PHP before deploying, but do these complicated solutions really serve the PHP developer and the underlying philosophy of how we write code? When it comes to PHP there is a nuanced difference between “performance” and “fast.”

Let’s talk about JSON.

JSON, a “text only” and “language independent” data-interchange format, is currently the cool kid on the block, but from the perspective of JavaScript?

var json = { "this": "is", "valid": "javascript" };

Wow. Talk about language independence. No reprocessing!

The equivalent in PHP:

$php = [ 'this' => 'is', 'valid' => 'php' ];

Tada! No overhead of having to validate, process, and convert to PHP. Is it uglier? Debatable.
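To make the comparison concrete, here is a minimal sketch of a configuration file that is plain PHP returning an array, loaded with `require`, next to the same data stored as JSON and decoded with `json_decode()`. The file names are hypothetical, the files are written to the system temp directory only so the example is self-contained, and `JSON_THROW_ON_ERROR` assumes PHP 7.3 or later.

```php
<?php
// Sketch: native PHP config vs. JSON config holding the same data.
// File names are hypothetical; a temp dir keeps the example self-contained.
$dir = sys_get_temp_dir();
$phpFile = $dir . '/example-config.php';
$jsonFile = $dir . '/example-config.json';

file_put_contents($phpFile, "<?php\nreturn [ 'this' => 'is', 'valid' => 'php' ];\n");
file_put_contents($jsonFile, '{ "this": "is", "valid": "php" }');

// Native PHP: the file *is* the array; `require` returns it directly.
$fromPhp = require $phpFile;

// JSON: read the text, then decode and validate it on every request.
$fromJson = json_decode(file_get_contents($jsonFile), true, 512, JSON_THROW_ON_ERROR);

var_dump($fromPhp === $fromJson); // bool(true)

// Clean up the temporary files.
unlink($phpFile);
unlink($jsonFile);
```

The design point is that the PHP version has no userland parsing step at all; with OPcache enabled, the compiled config file is cached like any other PHP script.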

To be clear: XML, Yaml, JSON, and friends are fine as documents or as data to be processed by PHP. This is totally normal and sometimes even useful. 😉 That said, any reasonable PHP developer must conclude that configuration files cannot be a bottleneck: not a bottleneck for speed of delivering shippable code, nor a bottleneck for acceptable performance. When choosing anything other than native PHP for configuration you are making a trade-off. Is the trade-off worth it? The answer is always no.[1]

But the secretary needs to be able to edit the app config live on the server and PHP is too hard for him!

No.

But caching! But Transpiling!

No.

But I like coding parsers!

Cool! Use your powers for docs and data, not PHP configs.

But I secretly want to be a JavaScript, Python, Ruby, C#, Java or anything but a PHP developer!

Uhhh, OK?

[1] Unless you are storing your PHP configs in Apache or Nginx as environment variables. Then to you, madam or sir, I bow down.

Get cwRsync Working With Vagrant On Windows 10

Getting cwRsync to work with Vagrant on Windows 10 is a pain.

This tutorial is for people who have:

- Installed Vagrant (currently 1.7.4)

- Installed cwRsync Free Edition (currently 5.4.1)

- Installed Git for Windows (currently 2.5.3)

Reading comprehension 101:

cwRsync is a standalone version of rsync for Windows that doesn’t require Cygwin to be installed. I don’t have Cygwin installed because Git for Windows includes Git Bash and this is “good enough.” With a regular standalone cwRsync installation, Cygwin will never be in the PATH, so Vagrant will never add the required /cygdrive prefix.

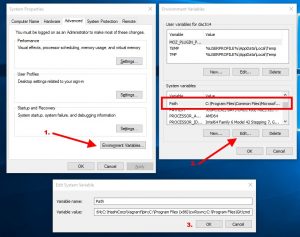

How to fix:

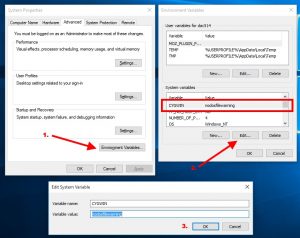

Add C:\Program Files (x86)\cwRsync (or wherever you installed it) to your PATH. To avoid problems, make sure this entry is placed before C:\Program Files\Git\cmd and/or C:\Program Files\Git\mingw64\bin;C:\Program Files\Git\usr\bin.

Add a system environment variable named CYGWIN with the value nodosfilewarning.

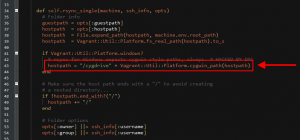

Edit (hack!) C:\HashiCorp\Vagrant\embedded\gems\gems\vagrant-1.7.4\plugins\synced_folders\rsync\helper.rb

Change line ~43 from:

hostpath = Vagrant::Util::Platform.cygwin_path(hostpath)

To:

hostpath = "/cygdrive" + Vagrant::Util::Platform.cygwin_path(hostpath)
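Why does prepending "/cygdrive" help? Assuming that on this setup `cygwin_path()` returns an MSYS-style path such as /c/Users/me/project (an assumption: the exact result depends on which bash and cygpath are in the PATH), cwRsync, being a Cygwin build, expects /cygdrive/c/Users/me/project instead. The string transformation is sketched below in PHP purely for illustration; `msysPath()` and `cygdrivePath()` are hypothetical helpers, not Vagrant code.

```php
<?php
// Illustration only: msysPath() and cygdrivePath() are hypothetical helpers,
// not Vagrant code. They mimic the two path styles involved in the hack.

// MSYS/Git Bash style: C:\Users\me\project -> /c/Users/me/project
function msysPath(string $windowsPath): string
{
    if (!preg_match('/^([A-Za-z]):(.*)$/', $windowsPath, $m)) {
        throw new InvalidArgumentException("Not an absolute Windows path: $windowsPath");
    }
    // Normalise separators to forward slashes and drop any trailing slash.
    $rest = rtrim(str_replace('\\', '/', $m[2]), '/');
    return '/' . strtolower($m[1]) . $rest;
}

// Cygwin style, as cwRsync expects: prepend /cygdrive, like the edited helper.rb line.
function cygdrivePath(string $windowsPath): string
{
    return '/cygdrive' . msysPath($windowsPath);
}

echo msysPath('C:\Users\me\project'), PHP_EOL;     // /c/Users/me/project
echo cygdrivePath('C:\Users\me\project'), PHP_EOL; // /cygdrive/c/Users/me/project
```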

Restart your shells to apply changes. Fiddle with your Vagrantfile. Tada!

Troubleshooting:

Git for Windows is based on MinGW; cwRsync is based on Cygwin. You cannot run Vagrant & cwRsync from Git Bash because cwRsync includes its own incompatible SSH binary. If you try, you will get the following error:

rsync error: error in rsync protocol data stream (code 12) at io.c(226) [Receiver=3.1.0]

Instead, launch Vagrant from Microsoft PowerShell.

Sources:

[1] http://auxmem.com/2010/03/17/how-to-squelch-the-cygwin-dos-path-warning/

[2] https://github.com/mitchellh/vagrant/issues/3230

[3] https://github.com/mitchellh/vagrant/issues/4586

WP Memcached Object Cache Leaks Memory?

While working on Pressbooks, a web application built on WordPress multisite, I noticed that some of our customers were getting blank pages in the admin section, specifically customers with a lot of sites (or books, as they are known in Pressbooks).

Checking the error logs I saw that these customers were running out of memory.

PHP Fatal error: Allowed memory size of 268435456 bytes exhausted (tried to allocate 292913 bytes) in /path/to/object-cache.php on line 212.

First, to temporarily stop the out-of-memory errors so I could profile, I added the following to wp-config.php:

define( 'WP_MAX_MEMORY_LIMIT', '512M' );
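The numbers in the fatal error are easier to read as php.ini-style shorthand: 268435456 bytes is 256 × 1024 × 1024, a 256M limit (which, as far as we can tell, matches WordPress's default WP_MAX_MEMORY_LIMIT), so 512M doubles the headroom. A small sketch of that conversion; `shorthandToBytes()` is a hypothetical helper, not WordPress's own `wp_convert_hr_to_bytes()`.

```php
<?php
// Sketch: convert php.ini-style shorthand sizes ("256M", "512M", "1G") to bytes.
// shorthandToBytes() is a hypothetical helper written for this illustration.
function shorthandToBytes(string $value): int
{
    $value = trim($value);
    $number = (int) $value; // leading digits only, e.g. "256M" -> 256

    // Each larger unit multiplies once more by 1024 via deliberate fall-through.
    switch (strtoupper(substr($value, -1))) {
        case 'G':
            $number *= 1024;
            // fall through
        case 'M':
            $number *= 1024;
            // fall through
        case 'K':
            $number *= 1024;
    }
    return $number;
}

echo shorthandToBytes('256M'), PHP_EOL; // 268435456, the limit in the error above
echo shorthandToBytes('512M'), PHP_EOL; // 536870912
```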

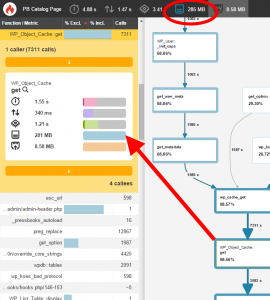

Next, using Blackfire.io, I was able to determine the following:

That is, when a customer was looking at their dashboard, PHP was consuming 285MB of memory, most of it used by the Memcached Object Cache plugin.

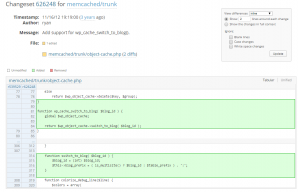

That’s weird. I’m using the latest version of the plugin, the plugin is developed by core developers, and no one has reported this before? Or so I thought! Browsing the plugin’s SVN repository, I see the following change committed to trunk:

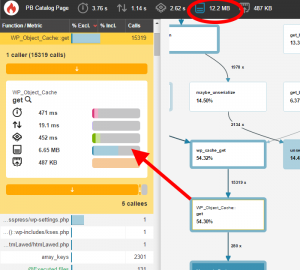

There are a few more fixes in there as well. After installing the trunk version of this plugin, Blackfire.io displayed:

That’s a 273MB improvement!

It took me days to figure out this problem. It would have saved me a lot of time had I seen the new code first.

Bonus info:

- The code in trunk has at least two bugs (just load the file in PHPStorm and the errors will be underlined in red).

- Redis Object Cache gives better results.

For now, this is good enough.

Top 3 LAMP Developer Frustrations Switching From Linux/OSX to Windows 10

Two weeks ago I drank the Kool-Aid and switched my laptop to Windows 10.

I haven’t used Windows on my personal computer in twelve years; in that time I ran Ubuntu and OSX. This is a big deal for me.

As a LAMP developer the switch has been painful. Here are my top 3 pain points:

SSH:

PuTTY, an app released in 1998, is still the best option for SSH on Windows. Actually, KiTTY is, but you still need PuTTY tools like Pageant or Key Generator to do anything useful. I spend too much time painstakingly converting perfectly good SSH keys into strange PPK files. I squint and click through a tree of options to do the most basic of tasks, like logging in without a password.

A better SSH for Windows might be Git for Windows (from git-scm.com). When you install it, you get Git Bash, which includes SSH. To be honest, the Git Bash terminal is open 100% of the time I am sitting at my desktop: an unfortunate island of isolation that my other Windows tools are constantly fighting against…

SSH toolkits such as OpenSSH are BSD licensed. The fact that Microsoft hasn’t included SSH in PowerShell by now is simply unacceptable. If Microsoft seriously wants web developers checking out Windows 10, then this is the biggest roadblock or, more to the point, this is the road that will lead me back to Linux when I can’t take it anymore.

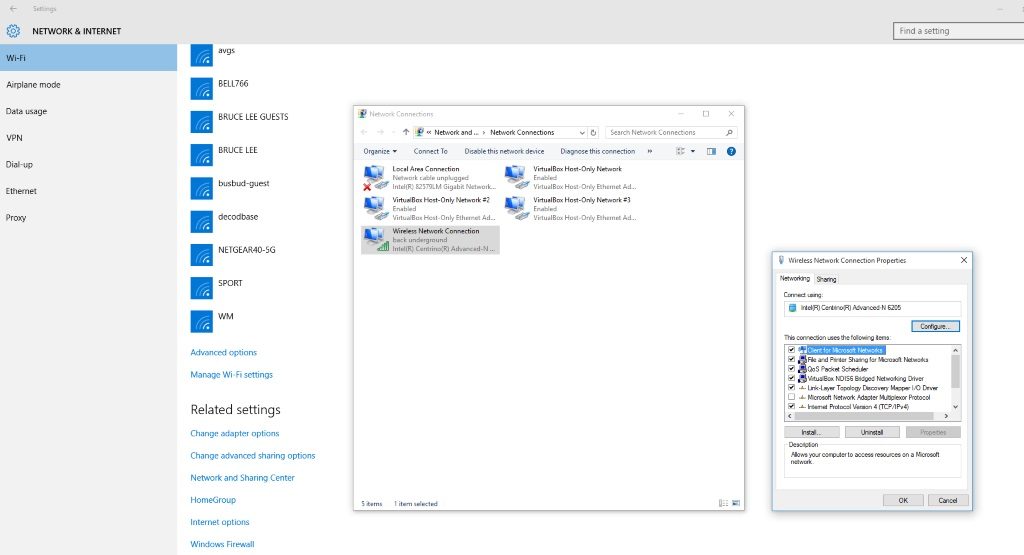

Configuration Dialogues:

As a developer, my monitor resolution is 1920 x 1080 (or higher!). In Windows 10, no matter where I start, I’m pretty much guaranteed that three clicks in I time-travel back to Windows NT: tiny, ugly, anti-responsive dialogues that require toothpick-like clicking to change everyday web developer configurations. Come on Microsoft, even Linux isn’t this ugly in 2015!

Hyper-V:

Hyper-V support in Vagrant! This is actually the main reason I switched. Hyper-V is Microsoft’s competitor to VirtualBox. Conclusion? Don’t believe the hype.

I spent days trying to get Vagrant to provision a LAMP stack using Hyper-V. I even spent $159.82 CAD to upgrade from Windows 10 Home to Pro so that I could activate this feature.

Hours wasted. I failed. Or, more specifically, I succeeded and it sucked for web development as I know it. I went back to VirtualBox. On the plus side, at least SMB shares work (with caveats!).

Here is a list of URLs for anyone who dares try this themselves. Maybe you’ll have better luck than me?

- How to upgrade from Windows 10 Home to Windows 10 Pro

- How to activate Hyper-V

- What is a Virtual Switch in Hyper-V?

- Running Ubuntu with DHCP on Hyper-V over WIFI

Hello World 10

I’m still a LAMP developer at heart, with all the Stockholm Syndrome that comes from making a living with PHP, but Microsoft is changing.

Most notably, C# is now open source. In 2014 I worked a job where I coded C# and, well, I liked it. For desktop, for tablet, for command line, I actually think the .NET ecosystem is pretty great. For web? For backend? Absolutely not. That said, all things considered, I decided I could no longer simply put my fingers in my ears singing “Na na na na I can’t hear you!”

Yes, I understand the distinction between libre and open, and at this point in my life I am willing to make the trade-off. I think Microsoft is setting up for the next decade, and by switching my laptop I am making a bet.

For this to pay off, Microsoft needs to accept that .NET is not the dominant web development platform and attract those developers anyway. If they make life easier for the eclectic ecosystem that is the *NIX backend, then mobile and desktop will follow.

But most of all, SSH out-of-the-box for fux sake!